The Philosophical Foundation

Let’s consider a day in the life of an AI-powered software engineer. He has a thought. A really good one. A proposal for a new microservice architecture. But he’s busy doing some other bit of work, so he quickly feeds a three-sentence prompt into a tool like an AI agent and tells it to make it professional. The AI agent dutifully expands his three sentences into a 2,000-word, multi-page memo. It’s filled with techno-speak, perfect headers. It even has synergistic adjectives. After a quick review, he’s satisfied, and sends it to his lead. This all took about 10 minutes, and he gets back to his other work.

His lead is also an AI-powered software engineer. She’s also busy reviewing code and making sure her team is on top of things. She sees her colleague’s idea and her first thought is: sigh, a wall of text! Thankfully, her email has a helpful AI-powered “Summarize” function. The AI bot takes those 2,000 words and, within minutes, boils them down into…

Three bullet points that look almost exactly like the original prompt. Not word for word, but they capture the gist.

That is weird!

Here’s another:

A person is laid off from their job, and suddenly becomes a job seeker. He got good terms, so he has some runway to find another job. He starts putting together his resume, and he remembers that AI can probably generate one from scratch. He moves over from an empty Google Doc to ChatGPT (Gemini nowhere to be found because Google isn’t a great product company), and then realizes there are AI tools that will create customized, targeted resumes given specific job openings. So he scours Indeed for some leads, marks the ones he likes, then links his Indeed and WeMakeResumes.ai (not a real company) accounts, and with some simple inputs (education, job history) and the click of a button, he fires off 12 different applications, with 12 targeted resumes and fully AI-generated cover letters. He’s feeling good. For now.

Let’s now move to one of the recruiters of one of the targeted companies. She logs in and is ready to look at some resumes. Five of the resumes are in the queue. She has her own AI tools that will help her match up these five that ended up on her desk with the client’s needs, to further whittle down the candidates to two. The recruiter reads these two resumes carefully, and finds one of them great, but one of them not so great. She keeps the good one, discards the bad one, and adjusts her AI tooling to improve the whittling. While she’s at it, she runs the filter one more time. Which filter, you might ask yourself? The first one that ran. I didn’t mention that one. That filter reviewed 395 resumes that had come in. And she ran the filter again, just to make sure it didn’t mistakenly filter out one she might want to schedule for an interview.

Our job seeker’s resume didn’t make the cut in either case. Nor did it make the cut in the other 11 companies, who were using similar workflows. In fact, in two of those companies, they don’t even bother with recruiters anymore, fully delegating to AI through interview scheduling.

That is weird!

In engineering terms, what I just described is akin to a no-op function. AI tools have created a glut of narrative: words that are meant to further elucidate. But the sheer amount of new data being generated requires AI tools to sift through it and understand it. So the same AI we leverage to create, we now leverage to consume. And something weird has happened: we’re intentionally putting a lot of our communication through an AI filter, burning lots of resources in the process, with very little new information being added.

But sadly that’s not the end of it. In fact, I would argue some wasted resources might be the least we should be concerned about.

Let me introduce you to Jean Baudrillard’s theory of Simulacra and Hyperreality.

A simulacrum (plural: simulacra) is, in essence, a copy of an original where the original is no longer available and has subsequently been forgotten.

Baudrillard used the metaphor of “The Map and the Territory” to illustrate his point, and it goes something like this: Imagine a kingdom so obsessed with its own geography that it creates a map of its kingdom that is 1:1 scale and so detailed that it’s indistinguishable from the territory itself. Eventually, the citizens start living on the map. Why not? The map might offer some simple improvements here and there on what might be perceived as inadequacies or unfortunate drawbacks. Meanwhile the actual earth underneath is withering away. The map becomes “de facto” real in the sense that the people that live on the map treat it as reality. Meanwhile, over time, the original territory dies. The map replaces the original in collective memory to the point where the original is wiped out from history. Baudrillard calls living in this state “hyperreality,” a condition in which the distinction between reality and simulation has collapsed, and the resulting simulacra do not just represent reality, but replace it.

There are other examples we can use. There’s some low-hanging fruit in the realm of social media: the carefully curated images and stories you see on Facebook and Instagram are a simulation of a desired life that maybe doesn’t reflect the real life underneath it. But let’s go to some more basic ideas.

Take for example Pumpkin Pie-flavored creamer, which has no actual real Pumpkin in it. Those who use it can claim with some sincerity that they’ve “tasted Pumpkin.” Have they tasted Pumpkin, though, if there’s no actual Pumpkin in the product?

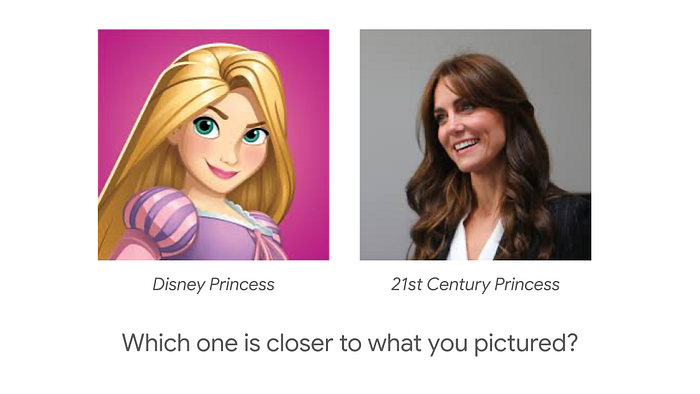

Here’s another example, one that I talked my kids through to some amusement as I prepared for this talk. Take a moment and picture in your mind a princess. Picture a princess in your mind and hold that picture.

Got it? Okay…

Of these two pictures of a princess, which one more closely matches what you thought?

You see, much in the same way, the 2,000-word AI-generated memo, the AI-generated summary of said memo, the AI-generated resume, and the filtering system that chose which resumes to present to the recruiter are merely pointers to actual thought. The AI output isn’t an act of understanding; it’s a lossy amplification or compression of a simulated understanding.

You can maybe see where I’m going with this.

When the creator doesn’t fully inhabit the creation, and the consumer doesn’t fully inhabit the consumption, then the actual human intent and connection simply evaporates. In the words of Baudrillard, we are left with a “Hyperreality”: a world where our Discord and Slack channels, YouTube and TikTok feeds, and our GitHub repositories and Medium blogs are overflowing with content, and yet… No one is actually saying anything. And no one is actually listening.

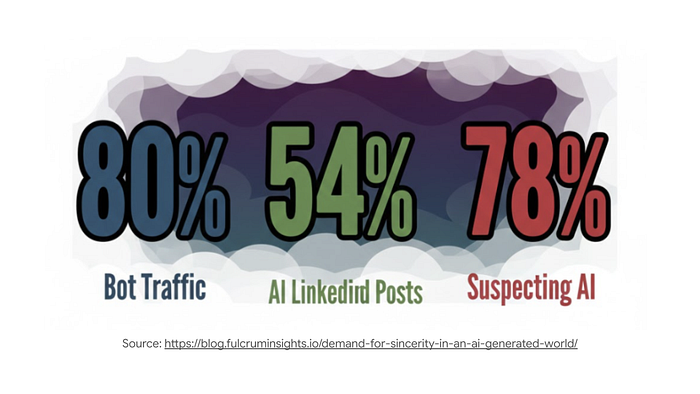

Some boring statistics. I’ll have a link later to the presentation where you can click through the sources.

- As much as 80% of all internet web traffic may be bots

- More than 80% of the recommendations you receive on social media are generated and determined by AI

- Up to 70% of all images you see on social media are generated or altered by AI

- 78% of Americans suspect the content they see and read on the internet (including this presentation!) is written or generated by AI

- At least 54% of LinkedIn posts are estimated to be generated by AI

- On average, at least 20% of all general social media interactions come from bots. And depending on the topic or time of year, that share may be as much as 80%

That feeling you have right now in your gut. The realization that maybe enhanced productivity isn’t the point. That, precisely, is why AI feels weird.

I submit to you that this is a problem that we should be concerned about. And we might be the generation best suited to wrestle with it because we still vividly remember a world before AI was a mainstream capability.

Authentic existence requires a direct connection to the physical, biological, and historical world. Without this connection, the real behind the simulation starts to erode.

So what? Who cares?

Look, I get it. Simulations are cool. Simulations are kinder than reality. Simulations allow us an escape from disappointment, or the ability to create the ideal version of ourselves. I was an avid World of Warcraft video game player, where I would spend hours curating that perfect character to slay a dragon I would never encounter in real life. I have a friend who spent hours in a video game called The Sims decorating a house she will never own and curating a family she will never hug in real life. I have another friend that is an avid fan of simulation racing, at one point having invested serious money in the tools required to accurately simulate driving real cars on real tracks, including multi-hour Le Mans type races, from the comfort of their living room. Simulations can give us access to experience, and inspire confidence that otherwise would not be possible.

So what’s the harm, really?

To be clear, I don’t think there’s anything fundamentally wrong with simulations, specifically or generally speaking. If you play The Sims or party at a rave through VR, knock yourself out! All the same, I think there are some risks we need to be aware of. There is a dark side to simulations. Specifically, a danger of losing touch with actual reality.

When AI sits in the middle of communication, passivity and vulnerability to manipulation by those who control the AI are very real risks. If we just passively take in all this fake stuff without thinking, we may allow powerful people and corporations to use these simulations to control what we want, what we think, and what we do. It encourages us to stop thinking for ourselves.

There can be an erosion of identity, where we forget who we are. If reality is constantly shifting and malleable, it becomes difficult to maintain a stable sense of self. There’s a risk our existence becomes purely performative, based on external simulations rather than our true selves.

There can be a loss of language. That is already happening, with a term like “news” being near meaningless these days. Or “vaccine,” for that matter.

And of all the dangers, the one that inspired me to put together these ideas and share them with you today is the danger of losing meaning and authenticity. If the real is overtaken by simulations, when everything feels fake, it’s hard to find truth or meaning in anything. Without sincerity, how can we ever find that which is real? That’s a genuine question!

And so to bring it back, if you agree with me even somewhat, I ask you: In our pursuit to build a perfect, high-fidelity map of a human being, can we prevent losing humanity in the process?

Absolutely, of course. 😁 I believe we can. AI may be the end of life as we know it, but that doesn’t mean a lesser quality existence must inevitably follow. The key is in realizing that we are in control of our destiny, and there is a lot we can do to leverage the joys of AI while mitigating the downsides.

Practical Ways to Think About AI

I’ve put together some ideas, some practical things we can do to maximize the benefit while minimizing the dangers and drawbacks.

Favor Augmentation over Automation

In product design, we learn early on that friction is the enemy. Users want one-click magic. And one-click magic is one of the quickest ways to lose agency. If I click one button and a whole project is created for me, do I own that project? Maybe I own it, but I certainly don’t inhabit it. It wasn’t my thoughts and experience that created it.

Self-driving cars are cool. You can sit in the back, relax, and not think about driving. But when it comes to creativity, we need to keep thinking for ourselves. Let’s not think of AI in an abstract autonomous vehicle sort of way, where we’re in a position where we can fall asleep while the AI does all the creative work.

As an alternative, let’s think instead in terms of exoskeletons.

Think about an exoskeleton for a moment. It doesn’t move unless the human inside it moves first. It multiplies the human’s strength, while the intent, the direction, the force, the why comes entirely from the person inside. I think this is the right model, a better way to think about it.

What may this look like?

This may mean replacing a “Generate All” mindset with a “Step-through Collaboration” mindset instead. Rather than AI generating it all, maybe it can generate an outline, you can fill in more details, then the AI can critique it, then you iterate on it some more. AI as a tool that helps you do what you do best, rather than AI as a tool that does what you do altogether.

Another thought is to have a heuristic for your daily work, where you’re cognizant of how much is done by the AI vs how much you contribute. The things the AI is great at are the things that have been done over and over again, the data that it has been trained on. The things the AI is predictably not going to be great at are the novel things. So boilerplate code, code solutions that adhere to a well-known design pattern, and so on: these are what the AI will excel at. Lived experience, that one nuanced challenge you had 10 years ago that you see also applies today: these are the things you must still contribute.

That’s how I created this talk. I used AI for research, to help me sketch out an outline, to critique my viewpoints, and even to rehearse parts of the talk itself for feedback. But the ideas are mine. This is the collaboration and iterative design approach that amplifies what’s real, rather than eradicating it.

Intentionally Exercise Your Brain

Next, let’s address something that I think we don’t talk nearly enough about, and that’s mental decay.

The more the AI does, the less your brain has to do. That can be nice for a while — it is exhausting to think. But what happens long term?

We have a slang term for a related habit of mindlessly scrolling through content: Brain rot. A mental decline from consuming excessive, low-quality internet content, leading to reduced focus and critical thinking. It describes cognitive fatigue from junk content, affecting how people think, speak, and pay attention.

And brain rot is increasingly no longer the exclusive territory of social media. As the autocomplete technology in our IDEs gets better, where whole functions are suggested, it’s easy to hit the tab key and move on. But are you processing what’s going on? Do you know why those three lines of code get the job done?

It’s not a leap to imagine that if we do this long enough, our own internal capacity to think withers. We become “prompt-driven” rather than “logic-driven.” Blink, and now you depend on the tool way more than you anticipated.

So the challenge here is simple. Periodically turn it off!

When you hit a truly complex bug, or when you’re designing a new module from scratch, close the AI pane. Solve it with a whiteboard, a notebook, or just your own thoughts. Think of it like training with a weighted vest; you need to grapple with the problem to keep your mental muscles sharp.

Proof of Thought

Okay, this next idea is a little rough in my own head, but I want to get it out there because I think it’s interesting and possibly a good bootstrap into solving something I consider a problem.

Here’s the problem. Before the age of LLMs, writing a ten-page technical spec or a thoughtful long-form essay was, in itself, a form of proof of work. Unless it was plagiarized, we could safely assume that the effort it took to produce something was, in part, a function of its depth and detail. And that effort acted as a signal. Because there was effort in producing it, it was probably worth consuming.

But today, AI has dropped the cost of production considerably. We are living through a hyper-inflation of creation. Words. Images. Even videos. If it takes me three seconds to generate a thousand words, and some really good sounding words at that, effort is no longer a function of depth and detail. Because it’s not clear what the effort was, the signal is gone. I’m not sure it’s worth my attention.

Okay, with that problem — how do we solve it? Well, using “Proof of Work” in blockchain as an inspiration, could we develop a similar “Proof of Thought” mechanism to secure human connection?

Let’s do a quick primer on Proof of Work first.

To prevent spam or fraud, networks like Bitcoin require miners to solve a massive, computationally expensive puzzle. These puzzles are asymmetric, much like the problems computer scientists group under NP: they are incredibly hard to solve, but effortless for the network to verify. By burning real-world electricity and clock cycles, the miner proves they have skin in the game. In the world of crypto, the cost of the work is exactly what makes the ledger trustworthy. It’s a signal that can’t be faked.
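To make that asymmetry concrete, here is a toy sketch of the idea. It is a simplification and not how Bitcoin actually does it (real mining hashes a block header twice with SHA-256 and compares against a numeric target). The function names `mine` and `verify`, the message format, and the "leading zero hex digits" rule are all assumptions made for illustration.

```python
import hashlib


def _digest(message: str, nonce: int) -> str:
    # Hash the message together with a candidate nonce.
    return hashlib.sha256(f"{message}:{nonce}".encode()).hexdigest()


def mine(message: str, difficulty: int) -> int:
    """Brute-force the smallest nonce whose hash starts with `difficulty` zeros.

    This is the expensive part: on average it takes about 16**difficulty tries.
    """
    prefix = "0" * difficulty
    nonce = 0
    while not _digest(message, nonce).startswith(prefix):
        nonce += 1
    return nonce


def verify(message: str, nonce: int, difficulty: int) -> bool:
    """Check a claimed solution. This is the cheap part: a single hash."""
    return _digest(message, nonce).startswith("0" * difficulty)


if __name__ == "__main__":
    nonce = mine("hello, ledger", difficulty=4)
    print(nonce, verify("hello, ledger", nonce, difficulty=4))
```

Finding the nonce takes tens of thousands of hashes at this difficulty; checking it takes one. That gap between the cost of producing and the cost of verifying is the property a “Proof of Thought” would need to borrow.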

Can we do something similar in a creative, cognitive space?

Just as Proof of Work secures a financial ledger by requiring energy, Proof of Thought could ensure cognitive engagement.

New tools might need to be built first with this idea in mind. I can imagine a UI where, before I hit send on an AI-generated reply, maybe the system highlights part of the reply, asks me to confirm if that’s what I meant to say, and forces me to confirm, or edit, that portion. Output from such a system could be trusted more readily, no?

I can think of another idea. Grammarly.com had a feature, may still have a feature, that can scan your text and tell you how much is novel vs how much can be found in other sources, with a percentage of how much of it might even be plagiarized. Could something like this exist, but could instead analyze for sincerity or novelty and provide space for the creator to

Perhaps we can imagine a system that detects if a document is, for lack of a better way to express this idea, 80% AI 20% human. Something like that. Or 70/30. And there is some threshold that the user can aim for.

I can also imagine how these ideas would be frustrating. AI speeds things up, and this idea of Proof of Thought slows things down. The goal here isn’t to be a nuisance, or to artificially increase the cost of something that could be cheaper. The goal is to ensure the user inhabits the creation before it leaves their machine. It’s a gut-check. It’s a moment of pause that asks: “Am I actually saying something, or am I contributing to the noise?”

Again, I only present this to you as an idea. I actually don’t know how it could work. But it seems intuitive to me that, in a reality where there’s just more stuff in the ether, we need ways to increase the signal and reduce the noise.
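As a rough sketch of what such a threshold check might look like, suppose the editor already records who wrote each span of a document. That is a big assumption, since reliably detecting authorship after the fact is an open problem. The `Span` type, the `"human"`/`"ai"` labels, and the 20% default below are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Span:
    text: str
    author: str  # hypothetical provenance label: "human" or "ai"


def human_share(spans: list[Span]) -> float:
    """Fraction of the document's characters that came from a human."""
    total = sum(len(s.text) for s in spans)
    if total == 0:
        return 0.0
    human = sum(len(s.text) for s in spans if s.author == "human")
    return human / total


def meets_threshold(spans: list[Span], minimum: float = 0.2) -> bool:
    """True when the human-written share reaches the target (assumed 20%)."""
    return human_share(spans) >= minimum


if __name__ == "__main__":
    doc = [
        Span("Generated summary of the quarterly numbers. ", "ai"),
        Span("The dip in March was my rollout mistake.", "human"),
    ]
    print(f"{human_share(doc):.0%} human", meets_threshold(doc))
```

Character count is a crude proxy. One sharp sentence can carry more thought than a page of filler, so a real tool would need a better measure than length.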

Honesty of Format

Think back to that 2,000-word memo I mentioned at the top. That wasn’t communication; it was semantic padding. It was an attempt to make an idea look strong through volume, as opposed to its intrinsic value. As engineers and product people, we need to realize that prose is the most expensive medium for a human to process.

Honesty of Format means that if the information you’re sharing is just a collection of data points, status updates, or logic, then let it stay as data. Don’t wrap it in a polite AI-generated paragraph. When you use AI to fluff up your stuff, you’re putting more effort on your recipient to get the message. Potentially worse, you’ve invited the recipient to use AI to summarize your message, potentially leading to miscommunication.

If it’s a report, give them a dashboard. If it’s a decision, give them a bulleted logic tree. Save prose for the moments that require humanity, like a difficult conversation, a vision for the future, or a nuanced critique. When we stop using AI to simulate effort through word count, we start respecting the cognitive load of the recipient.

Increased Transparency

As engineers, we look at diffs a lot. When we look at a Pull Request, we don’t just look at the final state of the code; we look at the differences: the specific lines that changed, the choices that were made, and the intent behind those changes. We want to know what was there before and what the engineer actually did to improve it.

I wonder if there isn’t something similar we can do with AI-generated output. Like the Proof of Thought idea, I’m not quite certain how to achieve this goal, but I think it’s worth exploring because sincerity thrives in transparency.

The low-hanging fruit here is watermarking AI-generated content. The watermark could be visible, or it could be metadata embedded in the bytes of the output.

A more evolved idea: Imagine a heatmap in a collaborative doc or a code editor. Instead of just seeing code, a heatmap highlights the sections where a human intervened. This might be the places where a person overrode the AI’s suggestion, added a specific edge case, or injected a personal anecdote.

Think about the power of that in a code review. If I can see that 90% of a PR was boilerplate generated by an LLM, but the remaining 10% contains a clever workaround for a legacy bug, that might be a strong guide about where to focus my attention.

(And no, it’s not necessarily on the human contributions!)
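One way such a heatmap could be approximated, assuming the tool kept the AI’s original suggestion around, is to diff the suggestion against the final text and label what changed. This is only a sketch: the function name `label_contributions` and the word-level granularity are my own assumptions, and it uses Python’s standard `difflib` rather than any real editor API.

```python
import difflib


def label_contributions(ai_draft: str, final_text: str) -> list[tuple[str, str]]:
    """Label each run of words in final_text as 'ai' or 'human'.

    Words kept unchanged from the AI draft are labeled 'ai'; words that were
    inserted or rewritten are labeled 'human'. Text the human deleted leaves
    no trace in the final text, so it is not reported here.
    """
    draft_words = ai_draft.split()
    final_words = final_text.split()
    matcher = difflib.SequenceMatcher(a=draft_words, b=final_words, autojunk=False)
    labeled = []
    for tag, _i1, _i2, j1, j2 in matcher.get_opcodes():
        if j1 == j2:
            continue  # pure deletion: nothing in the final text to label
        source = "ai" if tag == "equal" else "human"
        labeled.append((source, " ".join(final_words[j1:j2])))
    return labeled


if __name__ == "__main__":
    draft = "retry the request three times then fail"
    final = "retry the request three times with backoff then fail"
    for source, chunk in label_contributions(draft, final):
        print(f"[{source}] {chunk}")
```

A reviewer’s tool could render the `human` chunks in a warmer color. Whether those are the chunks that deserve the most scrutiny is still a judgment call.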

By exposing these details, we stop treating AI as a mask that hides a lack of effort. In fact, quite the opposite! We treat it as a tool that can help bring out our intent all the more. It brings the augmentation-over-automation idea back to the fore.

It shows that the parts only a human really should be doing, a human did! It turns the model inside out: Instead of the human disappearing into the AI, the AI becomes the canvas that makes the human’s contribution impossible to ignore.

The Sincerity Test

Finally, I want to leave you with a new kind of test. We have unit tests, integration tests, and smoke tests. I propose the Sincerity Test.

Before you ship your next AI-powered 15Five High Five, PR description, architectural spec, colleague feedback, or sensitive email, I want you to ask yourself one question:

“If I were the recipient, would I feel respected by this content, or would I feel like I’m being asked to process noise?”

When we send a 1,000-word, AI-polished status update that could have been a sentence, we aren’t being professional. We are being disrespectful. We are asking our colleagues to do the computation of reading something we didn’t have the sincerity to write.

Every piece of outgoing communication should have a specific, idiosyncratic insight that only you could have added. If you can’t find yourself in what you’re sending, don’t send it yet.

I used AI to write this presentation. But I didn’t run to AI, prompt it with “You make me feel weird, why is that,” and memorize the output. It was iterative. I first worked out what I thought. I looked up models of insincerity, ran into several different ones, and the Simulacra really hit home, so I ran with it. I thought about my own ideas for how we should be creating and consuming AI tools, thought about what we’re doing at Fifth Third Bank, and the product of that effort is this talk. I put together a first draft for a high-level talk, and then changed things up. I asked AI for feedback, but kept the product my own.

Did I write this presentation any faster? For what it is, absolutely! But to be clear, I didn’t think of it in terms of “how do I create a similar quality product faster?” Instead, my attitude was, “how do I create a better quality product in a similar amount of total effort?” Hopefully, this has been enjoyable, if not thought-provoking.

Wrapping it up

We are living through a pivot point in history. Very soon, the world will be drowning in perfect content. It will be beautiful. It will be grammatically flawless. And it will likely be completely hollow, without meaning or purpose.

In that world, Sincerity is going to become the most valuable asset we have. Not our ability to prompt, but our ability to mean what we say and know what we build.

We can choose to be the people who just move about the map, as in the Simulacra, or we can be the people who still remember where the Earth is.

Let’s build things that matter. Let’s say things that we mean. And let’s make sure that when a machine speaks to a machine… there’s still a human somewhere in the loop, making sure a meaningful thought actually exists.
